The promise was simple: give every physician a digital shadow. As burnout rates among U.S. clinicians continue to hover at crisis levels, the healthcare technology industry has pivoted toward a new savior: the AI ambient scribe. These tools, now deployed at major institutions from the Mayo Clinic to Kaiser Permanente, promise to listen to patient encounters and magically distill them into perfect clinical notes.

At US Healthcare Today, we have monitored the rapid adoption of these technologies with a mix of interest and skepticism. While the marketing suggests that AI scribes "give time back to doctors," the operational reality is more complex. We are seeing a shift in the nature of administrative work rather than its elimination. The AI scribe is not a magic wand; it is a new, sophisticated administrative layer that often forces clinicians into the role of high-stakes proofreaders, potentially moving the burnout goalposts rather than removing them.

The Shift from Creator to Editor

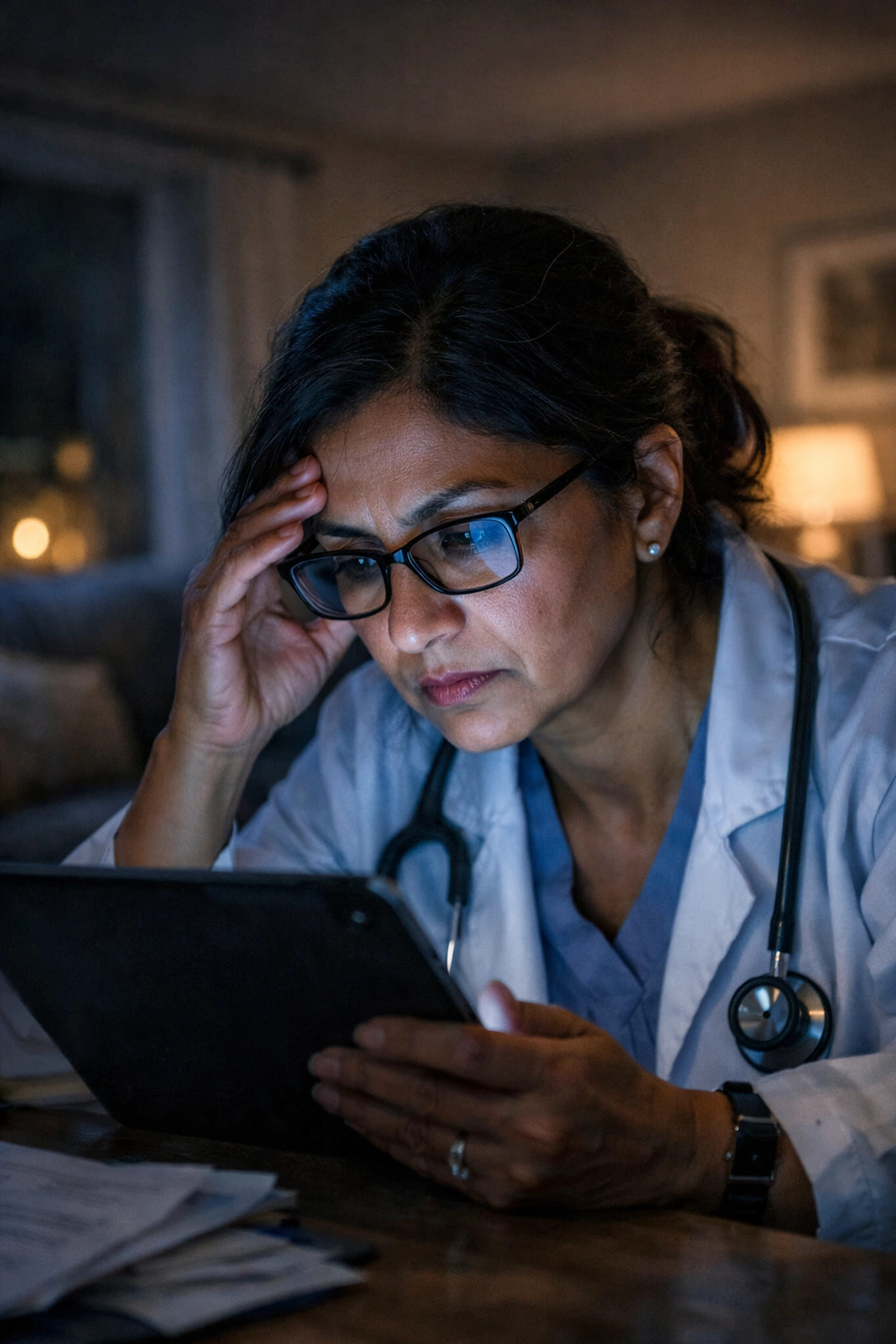

The primary marketing hook for AI scribes is the reduction of "pajama time," the late hours clinicians spend finishing charts at home. By transcribing conversations in real time and using large language models (LLMs) to generate clinical summaries, these tools aim to automate the most tedious parts of the job.

However, we must address the "review and edit" burden. In the U.S. healthcare system, a clinical note is not just a memo; it is a legal document, a billing trigger, and a clinical roadmap. Because AI is prone to "hallucinations" (confident but incorrect assertions), the burden of accuracy remains entirely on the clinician. Instead of synthesizing their own thoughts, doctors are now required to audit AI-generated text line by line for subtle errors that could have significant patient safety or legal consequences.

We believe that this "editor-in-chief" role represents a different kind of cognitive load. Scanning an AI-generated paragraph for a missing "not" or an incorrectly transcribed medication dosage requires a level of hyper-vigilance that can be just as exhausting as writing the note from scratch. When a clinician writes their own note, they are processing their clinical reasoning. When they edit an AI’s note, they are performing quality assurance for a machine.

The Regulatory and Billing Gauntlet

In the context of healthcare finance and cost control, the stakes for documentation are high. U.S. billing runs on CPT (Current Procedural Terminology) procedure codes and ICD-10 diagnosis codes. If an AI scribe fails to capture the specific elements required to support a given billing level, or if it mischaracterizes a patient’s history, the financial impact on a practice can be devastating.

Under payer documentation rules and malpractice standards, the physician’s signature at the bottom of the note attests that they have reviewed it and take responsibility for its contents. If an AI misses a nuance, such as a patient saying they "stopped taking" a medication versus "started taking" it, and the physician misses that error during their "efficient" review, the liability remains with the human. We argue that this creates a paradox: to be truly safe and compliant, the physician must review the AI’s work so thoroughly that the promised "efficiency gains" begin to evaporate.

The Erosion of Clinical Synthesis

There is a deeper, more subtle risk to the AI scribe mirage: the loss of the cognitive synthesis that occurs during the act of documentation. Research indicates that when clinicians summarize a patient visit, they aren't just recording data; they are actively processing the case. They are prioritizing symptoms, weighing differential diagnoses, and testing hypotheses.

By outsourcing this process to an ambient listener, we risk turning clinicians into passive observers of their own encounters. The act of writing is often the act of thinking. If the AI does the "thinking" for the clinician, we must ask what happens to the quality of clinical reasoning over time. If a doctor is simply nodding along to an AI-generated summary, are they as engaged with the patient’s diagnostic puzzle as they would be if they had to construct that narrative themselves?

The Economics of Shifting Burdens

From a healthcare leadership perspective, AI scribes are often viewed through the lens of hospital margins. If a tool can theoretically save a doctor 10 minutes per patient, the immediate institutional response is often to fill those 10 minutes with more patients.

This is where the burnout goalposts are truly moved. If the "efficiency" gained by AI is immediately consumed by increased patient volume, the clinician is left with no net reduction in stress. In fact, they may find themselves seeing more patients while also managing a growing queue of AI-generated drafts that need auditing. We view this not as a solution to burnout, but as a method for increasing the "throughput" of an already exhausted workforce.
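To make the arithmetic concrete, here is a rough sketch of how a per-patient saving can disappear once volume rises. Only the 10-minute saving comes from the scenario above; the review time, visit length, and patient counts are hypothetical assumptions chosen for illustration, and the function name is ours, not an industry metric.

```python
def net_minutes_saved(patients_per_day, minutes_saved_per_patient,
                      review_minutes_per_note, visit_minutes, added_patients):
    """Net clinician minutes saved per day; a negative value means more work.

    All inputs are illustrative assumptions, not measured data.
    """
    # Drafting time the scribe saves on the original patient panel.
    gross_saved = patients_per_day * minutes_saved_per_patient
    # Every AI draft, including those for added patients, must be audited.
    review_cost = (patients_per_day + added_patients) * review_minutes_per_note
    # Face time for the patients scheduled into the "freed" minutes.
    added_visit_cost = added_patients * visit_minutes
    return gross_saved - review_cost - added_visit_cost


# Hypothetical clinic day: 20 patients, 10 minutes saved per note,
# 3 minutes to review each draft, 15-minute visits.
print(net_minutes_saved(20, 10, 3, 15, added_patients=0))  # 140
print(net_minutes_saved(20, 10, 3, 15, added_patients=8))  # -4
```

Under these assumed numbers, adding eight visits to the schedule turns a 140-minute daily gain into a small net loss, which is the pattern described above.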

The digital transformation of the clinic must be measured by the well-being of the provider, not just the word count of the EHR. If the goal is truly to reduce burden, health systems must ensure that AI tools don't just become another whip to increase productivity at the expense of mental health.

A New Kind of Documentation Bias

We must also consider the bias inherent in AI models trained on specific types of clinical language. U.S. healthcare is diverse, but LLMs often reflect the dominant linguistic patterns of their training data. For clinicians working with non-native English speakers or patients with complex socio-economic backgrounds, the AI scribe may struggle to accurately capture the nuances of the encounter.

When the AI fails to understand a patient’s dialect or cultural context, the clinician must spend even more time rewriting the note to ensure it accurately reflects the patient’s reality. This creates a "documentation tax" on clinicians who serve marginalized populations, further weakening the incentives for equitable care.

Beyond the Mirage: What Real Progress Looks Like

We recognize that AI scribes are here to stay, and for some, they provide a much-needed relief from the keyboard. However, we believe it is critical to call out the "mirage" of effortless documentation. To truly address burnout, we need to look beyond the "review and edit" layer and address the systemic reasons why documentation is so burdensome in the first place.

- Simplifying Billing Requirements: Much of the documentation burden is driven by the need to justify billing codes rather than clinical necessity. Until we address the underlying healthcare economics, AI will just be a tool for navigating a broken system.

- Redesigning the EHR: Instead of building "wrappers" like AI scribes around failing EHR systems, we need a healthcare IT strategy that prioritizes intuitive, clinical-first interfaces.

- Human-Centered Implementation: AI should be an option, not a mandate. Clinicians should have the autonomy to decide which parts of their workflow are aided by AI and which require their direct manual synthesis.

Conclusion: Transparency in the AI Era

At US Healthcare Today, our commitment is to provide a transparent look at the technologies shaping our industry. The AI scribe is a powerful tool, but it is not a cure-all. We must be wary of any solution that claims to eliminate work while actually just transforming it into a different, perhaps more insidious, form of labor.

As we continue to cover the digital transformation of medicine, we will remain critical of "efficiency gains" that don't translate into a better life for those on the front lines. The goal of healthcare technology should be to support the human connection between doctor and patient, not to turn the doctor into a quality-assurance editor for a machine.

For more updates on the intersection of technology and clinician welfare, you can follow our ongoing coverage on our blog or browse our AI in healthcare section for deep dives into specific implementations. The mirage may be convincing, but we are here to help you see the reality beneath the surface.
