It is March 2026, and the honeymoon phase of healthcare AI is officially over. For the past few years, hospital boardrooms have been intoxicated by the promise of "autonomous hospitals" and "AI-driven diagnostics." Billions of dollars in venture capital and internal budgets have been poured into digital transformation. Yet, as we look at the landscape of digital health trends, the results are often underwhelming, if not outright dangerous.

At US Healthcare Today, we have observed a recurring pattern of failure. Most AI programs don't fail because the technology is broken; they fail because the implementation strategy is fundamentally flawed. We are seeing a "pilot purgatory" where expensive software sits unused or, worse, creates new layers of hospital administration challenges.

If you are an executive or an investor, you need to stop chasing the "shiny object" and start addressing the systemic rot in how AI is being deployed. Here are the seven critical mistakes currently plaguing healthcare AI implementation and the no-nonsense fixes required to save your ROI.

1. Viewing AI as a Replacement for Human Labor

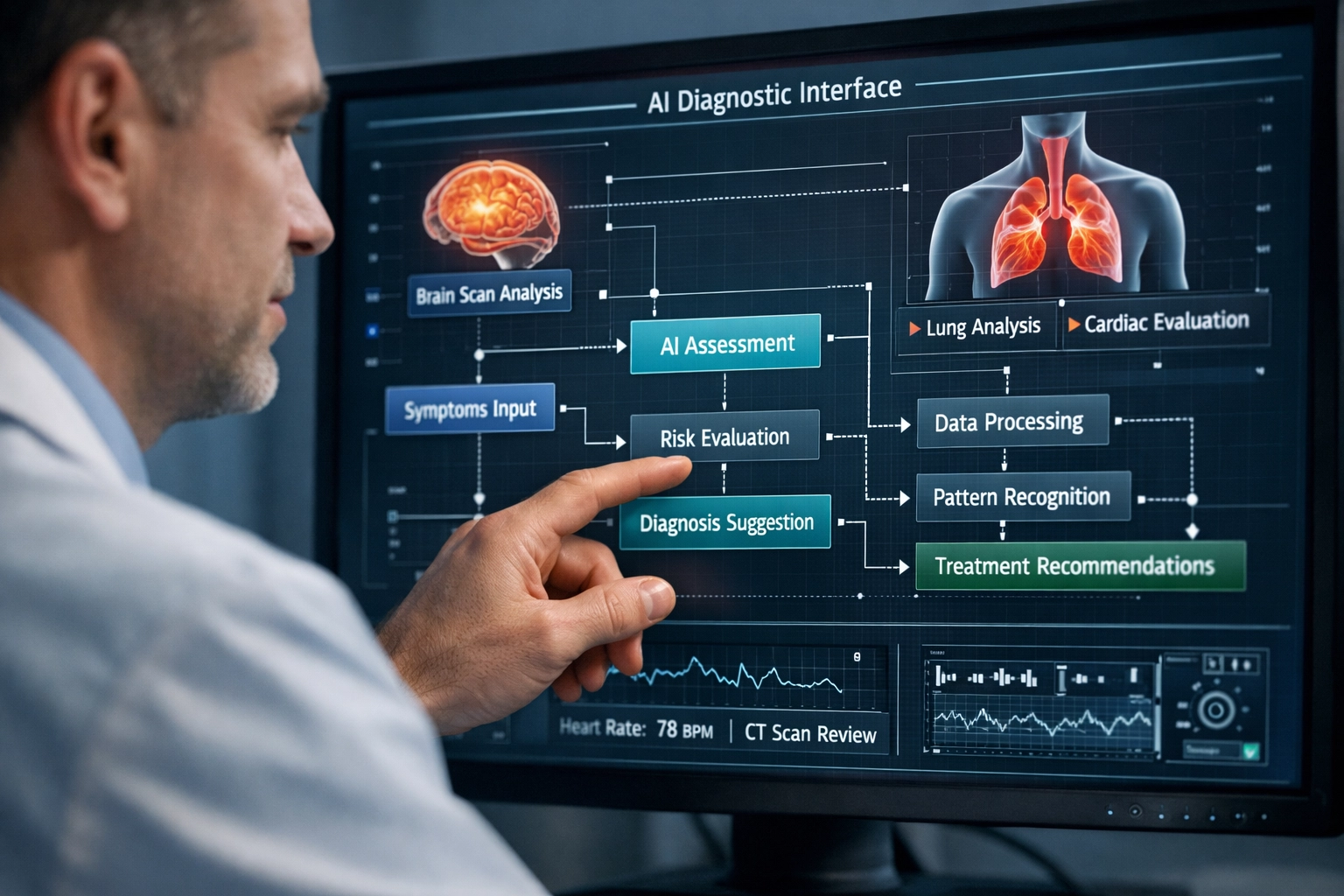

The most pervasive, and most expensive, delusion in healthcare today is the idea that AI will allow us to significantly reduce headcount in clinical settings. We see administrators looking at AI scribes or diagnostic tools as a way to "solve" the labor crisis by replacing doctors and nurses.

This is a fundamental misunderstanding of clinical workflow. AI lacks the nuanced understanding, empathy, and holistic clinical judgment that define high-quality care. When we attempt to fully automate clinical decision-making, we don't just lose the "human touch"; we lose the safety net that prevents catastrophic errors.

The Fix: We must pivot from "replacement" to "augmentation." Use AI to handle the administrative drudgery that leads to burnout (the charting, the coding, and the data gathering for prior authorization compliance) so that clinicians can actually practice medicine. The value of AI lies in its ability to extend a provider’s reach, not to take their chair.

2. Rushing Into Full Automation Without Rigorous Validation

The pressure to show progress in digital transformation in healthcare often leads to premature scaling. We see organizations deploying AI tools across entire departments before they have been validated in the specific, messy reality of their own patient populations.

The "black box" nature of many modern algorithms means they can work perfectly in a lab but fail spectacularly when faced with real-world data drift. In 2026, we are still seeing AI tools that make life-or-death predictions based on incomplete or "dirty" data from legacy EHRs.

The Fix: Adopt a phased, evidence-based approach. Every AI deployment should start with a "shadow mode" period in which the AI makes predictions but does not influence care. Compare its output against the judgment of human experts. If the gap between the two is too wide, the tool isn't ready. As we noted previously in "Why Most Healthcare AI Programs Don't Fail, They're Quietly Shut Down," this lack of early-stage validation is often what leads to those quiet shutdowns.
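To make the shadow-mode comparison concrete, here is a minimal Python sketch. It assumes each encounter yields a yes/no AI flag plus a yes/no conclusion from reviewing clinicians; the names `ShadowCase` and `shadow_mode_report` and the 10% `max_disagreement` threshold are hypothetical illustrations, not a clinical standard.

```python
from dataclasses import dataclass


@dataclass
class ShadowCase:
    """One encounter scored in shadow mode: the AI output is logged, never shown to clinicians."""
    ai_flag: bool      # what the model would have flagged
    expert_flag: bool  # what the reviewing clinicians concluded


def shadow_mode_report(cases, max_disagreement=0.10):
    """Compare AI flags with expert judgments.

    max_disagreement is a hypothetical go/no-go threshold, not a clinical standard.
    """
    if not cases:
        raise ValueError("no shadow-mode cases to evaluate")
    disagreements = sum(c.ai_flag != c.expert_flag for c in cases)
    expert_positives = [c for c in cases if c.expert_flag]
    missed = sum(not c.ai_flag for c in expert_positives)
    disagreement_rate = disagreements / len(cases)
    # Share of expert-confirmed cases the AI failed to flag (the dangerous kind of miss)
    miss_rate = missed / len(expert_positives) if expert_positives else 0.0
    return {
        "cases": len(cases),
        "disagreement_rate": disagreement_rate,
        "miss_rate": miss_rate,
        "ready": disagreement_rate <= max_disagreement,
    }


if __name__ == "__main__":
    sample = [ShadowCase(True, True), ShadowCase(False, True),
              ShadowCase(False, False), ShadowCase(True, False)]
    print(shadow_mode_report(sample))
```

Reporting the miss rate separately matters because overall agreement can look acceptable while the tool still overlooks the patients who most need attention; where exactly to set either threshold is a decision for the clinical team, not the vendor.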

3. Ignoring the "Black Box" Transparency Crisis

We are increasingly seeing hospitals purchase proprietary AI models that offer zero transparency into how they arrive at a recommendation. When a physician asks, "Why is the AI suggesting this patient is at risk for sepsis?" and the answer is "Because the algorithm said so," trust is instantly incinerated.

In an industry governed by liability and ethics, "because the algorithm said so" is an unacceptable clinical or legal defense. Operating "black box" systems without explainability is a massive liability for any healthcare institution.

The Fix: Prioritize explainable AI (XAI). We must demand that vendors provide models that highlight the specific clinical markers driving a decision. Transparency allows healthcare providers to verify recommendations, build trust with the system, and ultimately provide better patient care. If a vendor cannot explain their logic, they shouldn't be in your tech stack.

4. Failing to Audit for Algorithmic Bias

US healthcare system problems are often baked into the data itself. If your AI is trained on historical data that reflects existing disparities in care, the AI will not only replicate those biases; it will accelerate them.

We’ve seen algorithms that under-diagnose conditions in minority populations because the training data was skewed toward wealthier, white demographics. When these tools are used to allocate resources or predict risk, they become engines of systemic inequality.

The Fix: Implementation must include a rigorous bias audit. We need to ensure that training data is representative of the actual population your hospital serves. This isn't just about ethics; it's about clinical accuracy and legal compliance. Regular, ongoing monitoring for "algorithmic drift" is mandatory to ensure the tool remains equitable over time.
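One common starting point for such an audit is to compare how often the tool catches confirmed cases (its sensitivity) in each patient group. The Python sketch below assumes you can export `(group, predicted_positive, actually_positive)` records; the function names, group labels, and the 5-point `max_gap` tolerance are hypothetical, and a real audit would look at more than one metric.

```python
from collections import defaultdict


def subgroup_sensitivity(records):
    """Sensitivity (true-positive rate) per patient group.

    records: iterable of (group, predicted_positive, actually_positive) tuples.
    """
    hits = defaultdict(int)
    positives = defaultdict(int)
    for group, predicted, actual in records:
        if actual:  # only confirmed cases count toward sensitivity
            positives[group] += 1
            if predicted:
                hits[group] += 1
    return {group: hits[group] / positives[group] for group in positives}


def flag_underdiagnosed(records, max_gap=0.05):
    """Groups whose sensitivity trails the best-served group by more than max_gap.

    max_gap is a hypothetical tolerance; set it with clinical and legal input.
    """
    sensitivity = subgroup_sensitivity(records)
    if not sensitivity:
        return {}
    best = max(sensitivity.values())
    return {group: round(best - s, 3)
            for group, s in sensitivity.items() if best - s > max_gap}


if __name__ == "__main__":
    audit_sample = [("group_a", True, True), ("group_a", True, True),
                    ("group_b", True, True), ("group_b", False, True),
                    ("group_b", False, False)]
    print(flag_underdiagnosed(audit_sample))
```

Running the same check on each new month of production data, rather than only once before go-live, is what turns a one-time audit into the ongoing drift monitoring described above.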

5. Neglecting the "Tech Debt" and Data Security Gap

Many executives view AI as a standalone layer that sits on top of their existing infrastructure. In reality, AI is only as good as the data pipelines feeding it. We see organizations trying to run sophisticated 2026 AI on 2015 data infrastructure, resulting in massive "tech debt."

Furthermore, the privacy risks are escalating. Large Language Models (LLMs) and predictive analytics require massive amounts of sensitive patient data. Each new AI integration is a potential new door for a cyberattack.

The Fix: Treat AI implementation as an infrastructure project, not just a software purchase. We must invest in standardizing data formats and cleaning legacy databases before the AI goes live. Robust security protocols and strict data governance policies are non-negotiable. For a deeper look at these systemic issues, browse our data privacy category for related resources.

6. Poor Integration into Clinical Workflows

The greatest AI in the world is worthless if it adds five minutes to a nurse’s workflow. We see a recurring mistake where AI "solutions" are delivered through separate portals or disconnected dashboards. Clinicians are already suffering from "click fatigue"; asking them to log into another tool is a recipe for non-adoption.

The widely cited 95% failure rate of AI pilots is rarely due to the math; it’s due to the friction. If the AI doesn’t live inside the EHR or the natural workflow of the clinician, it will be ignored.

The Fix: Foster radical collaboration between IT leaders and clinical staff. Before a single line of code is deployed, we must map out the existing workflow and identify the exact "insertion point" for the AI. Integration must be seamless. If it isn't making the clinician’s life easier, it isn't a solution.

7. Over-Reliance on Predictive Analytics Without a Plan

There is a growing trend of "predictive guesswork" disguised as healthcare IT strategy. Hospitals are deploying AI to predict everything from patient no-shows to readmission risks, but they have no actual plan for what to do with that information.

Knowing a patient is at high risk for readmission is only valuable if you have the staff and the workflow to intervene. Without an action plan, predictive analytics just creates "alert fatigue" and increases the mental load on already stressed administrators.

The Fix: Every predictive AI tool must be paired with an operational protocol. If the AI flags a risk, what is the specific, documented intervention? We must focus on actionable insights rather than just "data for the sake of data." In health tech investing, the ROI isn't in the prediction; it's in the prevention.
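One lightweight way to enforce that pairing is to refuse to route any alert type that lacks a documented protocol. The Python sketch below assumes a hypothetical `PROTOCOLS` registry and `route_alert` function; the alert names, interventions, owners, and response windows are illustrative placeholders, not clinical recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Protocol:
    intervention: str          # the documented action to take
    owner_role: str            # who is accountable for acting on the alert
    respond_within_hours: int  # how quickly the action must happen


# Hypothetical registry: every alert type a model can emit must map to an entry here.
PROTOCOLS = {
    "readmission_high_risk": Protocol(
        "Schedule a post-discharge follow-up call", "transitional care nurse", 48),
    "no_show_high_risk": Protocol(
        "Send a reminder and offer a telehealth option", "scheduling staff", 24),
}


def route_alert(alert_type, patient_id, protocols=PROTOCOLS):
    """Turn a model flag into an assigned task, or reject it if no protocol exists."""
    protocol = protocols.get(alert_type)
    if protocol is None:
        # An alert nobody is assigned to act on only adds to alert fatigue
        raise LookupError(f"no documented protocol for alert type {alert_type!r}")
    return {
        "patient_id": patient_id,
        "action": protocol.intervention,
        "assigned_to": protocol.owner_role,
        "due_in_hours": protocol.respond_within_hours,
    }


if __name__ == "__main__":
    print(route_alert("readmission_high_risk", "patient-001"))
```

The design choice here is to fail loudly: a new model output cannot reach anyone's queue until someone has written down what should happen when it fires and who owns that response.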

The Bottom Line

The US healthcare system is often criticized for being "broken," but as we’ve argued before, it is operating exactly as designed. The current rush to AI is frequently just a way to squeeze more efficiency out of a flawed model rather than fixing the underlying issues.

To succeed in 2026, healthcare leaders must move past the marketing hype. Stop treating AI as a magic wand and start treating it as a complex, high-stakes piece of medical equipment. It requires calibration, maintenance, human oversight, and a deep understanding of the clinical environment.

If you are struggling with your digital transformation, you are not alone. But continuing down the path of poorly planned automation is a guaranteed way to burn capital and lose clinical trust.

For more critical analysis on the intersection of technology and policy, explore our blog or reach out through our contact form to join the conversation. The future of healthcare isn't just about having the best AI; it's about having the best strategy for using it.
